Equipment

VICON

This motion-capture system comprises a set of cameras [Fig. 1(a)] that are calibrated to detect reflective markers placed in the environment. Using the locations of the markers in the images, together with the cameras’ extrinsic calibration parameters, the VICON system can determine the position and attitude of a moving object with sub-centimeter and sub-degree accuracy. We use this motion-capture system to provide ground truth for testing the accuracy of our 3D localization and mapping algorithms, as well as for developing and testing agile controllers for autonomous quadrotors.
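As a rough illustration of how a marker position can be recovered from several calibrated cameras, the following sketch triangulates one marker from two viewing rays. It is not VICON's actual algorithm. It assumes each marker detection has already been back-projected, using the camera's calibration, into a ray with a known origin and direction in a common world frame. The function name `triangulate_midpoint` and the inputs are hypothetical.

```python
def triangulate_midpoint(c1, d1, c2, d2):
    """Estimate a 3D marker position from two camera rays.

    Each ray is origin + t * direction, expressed in a shared world frame
    (an assumption of this sketch). Returns the midpoint of the shortest
    segment connecting the two rays.
    """
    def dot(u, v):
        return sum(a * b for a, b in zip(u, v))

    # Vector between the two ray origins.
    w = [a - b for a, b in zip(c1, c2)]

    a = dot(d1, d1)
    b = dot(d1, d2)
    c = dot(d2, d2)
    d = dot(d1, w)
    e = dot(d2, w)

    denom = a * c - b * b
    if abs(denom) < 1e-12:
        # Parallel rays carry no depth information.
        raise ValueError("rays are parallel; cannot triangulate")

    # Ray parameters of the mutually closest points.
    t1 = (b * e - c * d) / denom
    t2 = (a * e - b * d) / denom

    p1 = [o + t1 * k for o, k in zip(c1, d1)]
    p2 = [o + t2 * k for o, k in zip(c2, d2)]

    # With noisy detections the rays do not intersect exactly,
    # so take the midpoint of the closest points.
    return tuple((u + v) / 2.0 for u, v in zip(p1, p2))
```

With more than two cameras, a least-squares solution over all rays would normally be preferred. The two-ray case is shown only because it keeps the geometry easy to follow.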

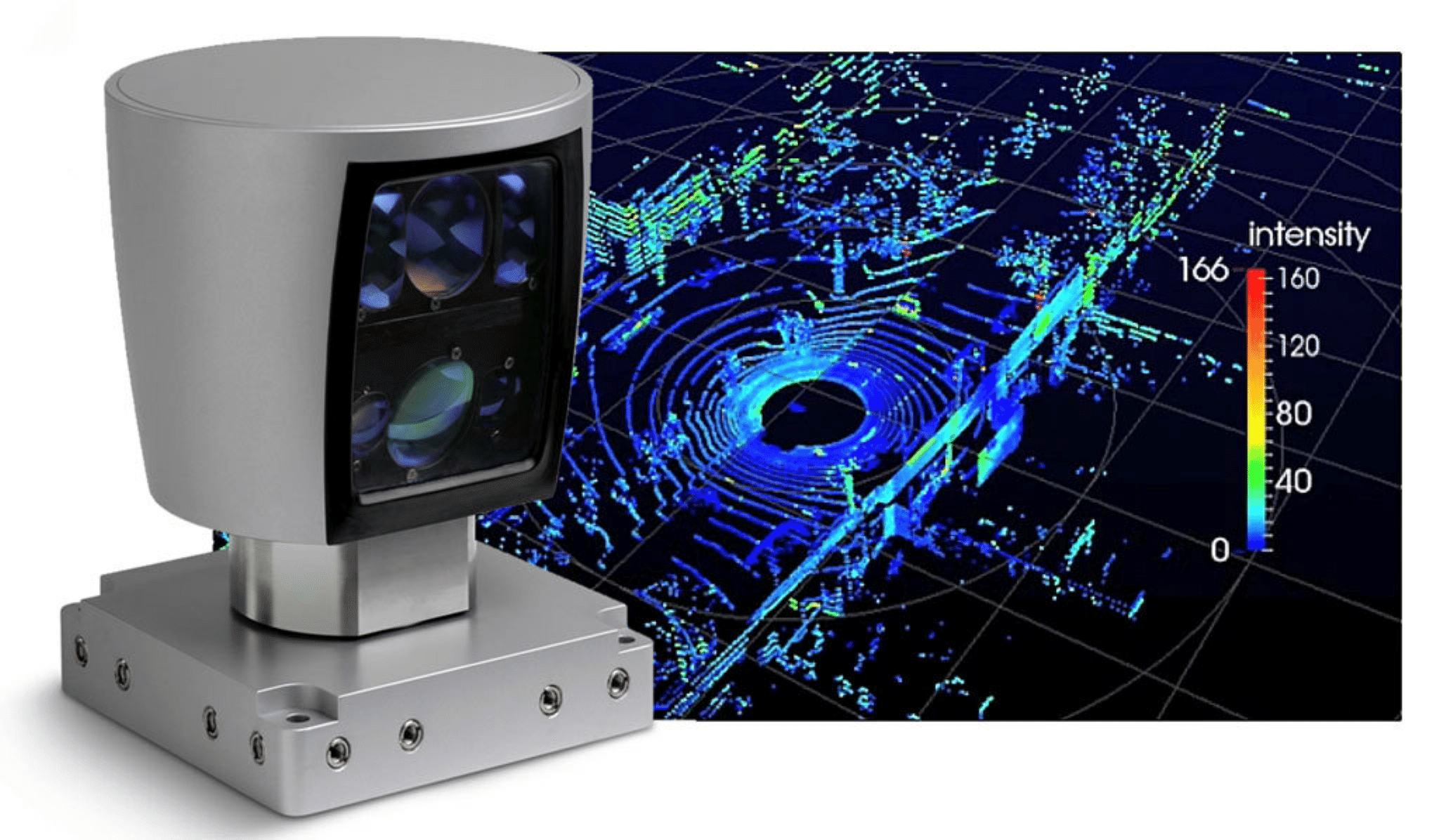

Velodyne’s HDL-64E

This lidar sensor [Fig. 1(b)] uses 64 spinning laser beams, spaced at different elevation angles, to measure the distance to its surroundings. The resulting 3D point cloud (1.2 million points per second) is used as part of an inertial system for performing large-scale outdoor mapping.
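To make the link between range measurements and a 3D point cloud concrete, here is a minimal sketch that converts one beam return into Cartesian coordinates. The axis convention (x forward, z up, azimuth measured counter-clockwise from x) and the function name `beam_to_point` are assumptions made for illustration. A real sensor driver also applies per-laser calibration corrections, which are omitted here.

```python
import math


def beam_to_point(range_m, azimuth_deg, elevation_deg):
    """Convert one lidar return to (x, y, z) in the sensor frame.

    range_m:       measured distance along the beam, in meters
    azimuth_deg:   rotation angle of the spinning head, in degrees
    elevation_deg: fixed elevation angle of the laser, in degrees
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)

    # Project the range onto the horizontal plane, then split it by azimuth.
    horizontal = range_m * math.cos(el)
    x = horizontal * math.cos(az)
    y = horizontal * math.sin(az)
    z = range_m * math.sin(el)
    return (x, y, z)
```

Applying this conversion to every return collected during one revolution of the head, across all beams, yields one sweep of the point cloud.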

Figure 1: (a) VICON camera (photo courtesy of VICON). (b) Velodyne’s HDL-64E lidar with a sample point cloud (photo courtesy of Velodyne).

Ultimaker 2

This 3D printer [Fig. 2] allows us to create custom mounting parts, at a resolution of 60 microns, which are needed when designing different navigation-payload configurations.

Figure 2: Ultimaker 2 3D printer (Photo courtesy of Ultimaker)