Sensor Calibration and Alignment

- Summary

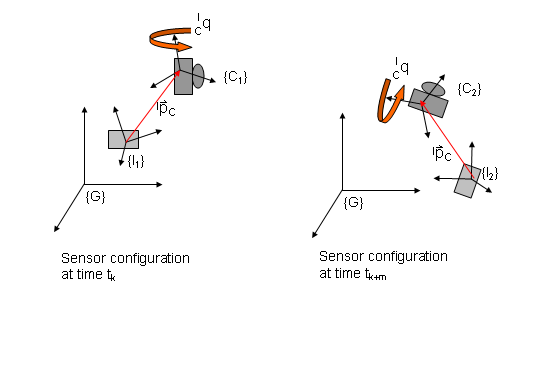

State estimation for autonomous ground and aerial vehicles relies on processing measurements from a network of sensors distributed across the body of the robot. To combine kinetic information from a set of sensors optimally, accurate estimates of their relative position and orientation must be available. Sensor alignment is often performed either by measuring the relative poses of the sensors directly with a tape measure, or by employing a third device, such as a laser scanner, that measures the poses of all sensors with respect to a ground frame of reference. In both cases, the accuracy of the derived estimates is limited by that of the measuring device. Misalignment errors account for the main portion of the systematic errors in the estimation process and cannot be filtered out during motion. In addition, sensor alignment is a tedious and time-consuming process that has to be repeated manually before every experiment. We design and implement automated self-alignment algorithms that do not rely on external measurements. Instead, they are based on measurements from the sensors on board the vehicle and provide precise relative pose estimates during the initial alignment phase.
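
To illustrate why misalignment acts as a systematic error rather than as noise, the following is a minimal 2D sketch and not the alignment algorithm described above. It assumes a single sensor whose true mounting yaw differs from the yaw assumed by the estimator, and zero-mean Gaussian measurement noise. The function names (`rotate`, `mean_body_estimate`, `error_norm`) and all parameter values are hypothetical, chosen only for illustration.

```python
import math
import random


def rotate(theta, v):
    """Rotate the 2D vector v counter-clockwise by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * v[0] - s * v[1], s * v[0] + c * v[1])


def mean_body_estimate(v_body, yaw_true, yaw_assumed, sigma, n, seed=0):
    """Average n noisy sensor readings after mapping them to the body frame.

    The sensor is mounted at yaw_true relative to the body, but the
    estimator maps readings back using yaw_assumed (both hypothetical).
    """
    rng = random.Random(seed)
    sum_x = sum_y = 0.0
    for _ in range(n):
        # What the sensor observes: the body-frame vector expressed in
        # the sensor frame, corrupted by zero-mean Gaussian noise.
        m = rotate(-yaw_true, v_body)
        m = (m[0] + rng.gauss(0.0, sigma), m[1] + rng.gauss(0.0, sigma))
        # Map the reading back to the body frame with the assumed yaw.
        b = rotate(yaw_assumed, m)
        sum_x += b[0]
        sum_y += b[1]
    return (sum_x / n, sum_y / n)


def error_norm(estimate, truth):
    """Euclidean distance between an estimate and the true vector."""
    return math.hypot(estimate[0] - truth[0], estimate[1] - truth[1])


if __name__ == "__main__":
    v = (1.0, 0.0)
    aligned = mean_body_estimate(v, math.radians(2.0), math.radians(2.0), 0.05, 20000)
    misaligned = mean_body_estimate(v, math.radians(2.0), 0.0, 0.05, 20000)
    print("error with correct alignment:", error_norm(aligned, v))
    print("error with 2 degree misalignment:", error_norm(misaligned, v))
```

Under these assumptions, averaging drives the error toward zero when the assumed yaw matches the true one, while a yaw offset of `d` leaves a residual of about `2 * sin(d / 2)` times the vector's magnitude no matter how many samples are averaged.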