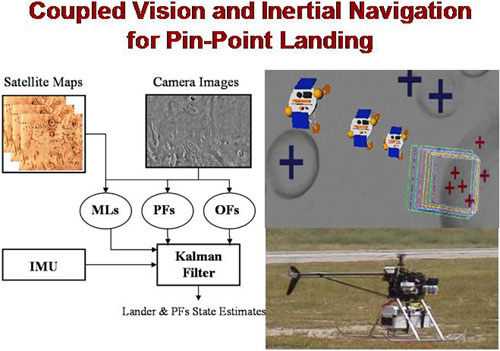

Coupled Vision and Inertial Navigation for Pin-point Landing

- Dr. Andrew Johnson (co-PI), Dr. James Montgomery (co-PI)

This work focuses on precise state estimation for pinpoint landing of spacecraft. We are investigating and developing real-time estimation techniques that aid inertial navigation with image-based estimates of the motion and pose of the spacecraft. More specifically, we are designing and implementing an Extended Kalman Filter (EKF) estimator that will process inertial measurements of acceleration and rotational velocity, provided by an Inertial Measurement Unit (IMU), together with images of visual features on the surface of the planet, such as craters, ridges, and mountain peaks, acquired during the Entry, Descent, and Landing (EDL) phase.
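As a rough illustration of the predict/update cycle that such a filter performs, the sketch below runs a linear Kalman filter on a single axis (altitude and vertical velocity). It is a deliberately simplified stand-in for the EKF, not the project's estimator: the one-dimensional state, the function names `predict` and `update`, and the noise parameters `q_accel` and `r_pos` are all assumptions made for this example. An accelerometer sample drives the prediction, and a position fix (for instance one derived from imagery) drives the correction.

```python
def predict(x, P, accel, dt, q_accel):
    """Propagate the state [position, velocity] with one accelerometer sample.

    x: [position, velocity]; P: 2x2 covariance as nested lists.
    q_accel: assumed variance of the accelerometer noise (hypothetical value).
    """
    pos, vel = x
    # Constant-acceleration kinematics over the interval dt.
    x_new = [pos + vel * dt + 0.5 * accel * dt * dt, vel + accel * dt]

    # Covariance propagation P_new = F P F^T + Q with F = [[1, dt], [0, 1]].
    a00 = P[0][0] + dt * P[1][0]
    a01 = P[0][1] + dt * P[1][1]
    a10, a11 = P[1][0], P[1][1]
    # Process noise Q = G G^T * q_accel with G = [0.5 dt^2, dt].
    g0, g1 = 0.5 * dt * dt, dt
    P_new = [
        [a00 + a01 * dt + g0 * g0 * q_accel, a01 + g0 * g1 * q_accel],
        [a10 + a11 * dt + g1 * g0 * q_accel, a11 + g1 * g1 * q_accel],
    ]
    return x_new, P_new


def update(x, P, z_pos, r_pos):
    """Correct the state with a direct position measurement z_pos.

    The measurement matrix is H = [1, 0]; r_pos is the assumed
    measurement-noise variance (hypothetical value).
    """
    s = P[0][0] + r_pos          # innovation variance
    k0 = P[0][0] / s             # Kalman gain, position component
    k1 = P[1][0] / s             # Kalman gain, velocity component
    y = z_pos - x[0]             # innovation (measured minus predicted)
    x_new = [x[0] + k0 * y, x[1] + k1 * y]
    # P_new = (I - K H) P
    P_new = [
        [(1.0 - k0) * P[0][0], (1.0 - k0) * P[0][1]],
        [P[1][0] - k1 * P[0][0], P[1][1] - k1 * P[0][1]],
    ]
    return x_new, P_new
```

Calling `predict` repeatedly between images lets the position uncertainty grow with the inertial noise, and each call to `update` shrinks it again. That is the qualitative behavior aided inertial navigation relies on.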

Based on prior knowledge of their location and on our ability to autonomously detect and track them through sets of images, features on the surface of a planet can be categorized as: (1) Mapped Landmarks (ML), whose positions have already been determined in planet-based coordinates from satellite imagery; (2) Persistent Features (PF), which are distinct scale-invariant visual features that appear throughout sequences of images (these correspond to surface characteristics that are difficult to model accurately and to match consistently to known features in satellite images); or (3) Opportunistic Features (OF), which appear and can be reliably tracked only between pairs of images.
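The three categories can be expressed as a small decision rule. The sketch below is only illustrative: the names `FeatureType` and `classify_feature`, and the use of a simple image count to separate PFs from OFs, are assumptions for this example rather than the project's actual criteria.

```python
from enum import Enum


class FeatureType(Enum):
    MAPPED_LANDMARK = "ML"
    PERSISTENT_FEATURE = "PF"
    OPPORTUNISTIC_FEATURE = "OF"


def classify_feature(has_map_position, images_tracked):
    """Assign a feature to one of the three categories.

    has_map_position: True if the feature was matched to a landmark whose
        planet-based coordinates are known from satellite imagery.
    images_tracked: number of consecutive images in which it was tracked.
    """
    if has_map_position:
        # Known global position: usable even from a single image.
        return FeatureType.MAPPED_LANDMARK
    if images_tracked < 2:
        # An unmapped feature seen once carries no motion information.
        raise ValueError("unmapped features must be tracked in at least two images")
    if images_tracked > 2:
        # Tracked through a longer sequence of images.
        return FeatureType.PERSISTENT_FEATURE
    # Tracked only between a pair of images.
    return FeatureType.OPPORTUNISTIC_FEATURE
```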

The treatment of the visual-feature measurements within the EKF estimator will differ according to their characteristics and information content. In particular, sets of at least three MLs provide measurements that support direct estimation of all degrees of freedom of the spacecraft pose. Depending on the number of mapped features within the field of view of the camera, the MLs will be used either to derive estimates of the state of the spacecraft or to impose constraints on combinations of its components.
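The counting argument behind the three-landmark requirement is that each ML observed in an image contributes two scalar measurements (its image coordinates), so three MLs contribute six, which matches the six degrees of freedom of the pose (three for position, three for attitude). The sketch below shows one such measurement under an assumed ideal pinhole camera model. The function name `project_landmark`, the frame conventions, and the default focal length are hypothetical choices for this example.

```python
def project_landmark(p_landmark, p_camera, R_cam_from_global, focal_length=1.0):
    """Project a mapped landmark into the image with a pinhole model.

    p_landmark, p_camera: 3-element positions in the planet-based frame.
    R_cam_from_global: 3x3 rotation (nested lists) taking global-frame
        vectors into the camera frame, whose z axis is the optical axis.
    Returns the two image coordinates (u, v).
    """
    # Vector from the camera to the landmark, in the global frame.
    d = [p_landmark[i] - p_camera[i] for i in range(3)]
    # The same vector expressed in the camera frame.
    pc = [sum(R_cam_from_global[r][i] * d[i] for i in range(3)) for r in range(3)]
    if pc[2] <= 0.0:
        raise ValueError("landmark is behind the camera")
    # Perspective division.
    return (focal_length * pc[0] / pc[2], focal_length * pc[1] / pc[2])


def measurement_residual(z_observed, p_landmark, p_camera, R_cam_from_global):
    """Difference between an observed and a predicted ML image position."""
    u, v = project_landmark(p_landmark, p_camera, R_cam_from_global)
    return (z_observed[0] - u, z_observed[1] - v)
```

In an EKF, a residual of this kind, together with the Jacobian of the projection with respect to the pose, would feed the measurement update. The Jacobian is omitted here to keep the sketch short.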

This form of aided inertial navigation will allow precise state estimation for most of the EDL phase, provided that the MLs project within the image plane. During the later phase of EDL, when approaching the surface of the planet, the role of the PFs and OFs will become more prominent. Specifically, the positions of the PFs will be included in the state vector and estimated in real time once their images become available to the EKF. Information from subsequent observations of PFs will be used to correct estimation errors caused by noise in the inertial measurements. Finally, in order to increase robustness and achieve real-time performance, OFs will be treated separately, and only the inferred estimates of spacecraft pose displacement will be provided to the EKF estimator.
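Including a PF in the state vector amounts to augmenting the state and its covariance when the feature is first observed. The sketch below shows only the bookkeeping, under strong simplifying assumptions: the new feature is given a large, uncorrelated initial variance, whereas a full EKF would typically initialize it from the first observation and compute the cross-correlations from the measurement Jacobians. The name `augment_state` and its parameters are hypothetical.

```python
def augment_state(x, P, feature_position, feature_var):
    """Append a newly observed persistent feature to the filter state.

    x: current state vector (list of floats).
    P: current covariance (square nested list matching x).
    feature_position: initial 3D position guess for the feature.
    feature_var: assumed initial variance per axis (large = uninformative).
    """
    n = len(x)
    m = len(feature_position)
    x_aug = list(x) + list(feature_position)
    # Existing rows gain m zero columns (no initial cross-correlation).
    P_aug = [list(row) + [0.0] * m for row in P]
    # New rows are zero except for the feature's own variance on the diagonal.
    for i in range(m):
        row = [0.0] * (n + m)
        row[n + i] = feature_var
        P_aug.append(row)
    return x_aug, P_aug
```

Later observations of the same feature would then update both the feature position and the spacecraft state through the usual EKF correction step.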

This effort builds on previous results in related work, and all algorithms are being integrated and tested on an autonomous helicopter platform. We anticipate that it will result in a new class of estimation algorithms that allow precise navigation through the different phases of EDL and provide pinpoint landing capabilities.

- Pin-Point Landing based on Mapped Landmark Observations

- Vision-Aided Inertial Navigation

- Sensor Calibration and Alignment

- C5. N. Trawny, A.I. Mourikis, S.I. Roumeliotis, A.E. Johnson, and J.F. Montgomery, "Vision-Aided Inertial Navigation for Pin-Point Landing using Observations of Mapped Landmarks", Journal of Field Robotics, 24(5), May 2007, pp. 357-378. (pdf)

- C4. J.F. Montgomery, A.E. Johnson, S.I. Roumeliotis, and L.H. Matthies, "The Jet Propulsion Laboratory Autonomous Helicopter Testbed: A Platform for Planetary Exploration Technology Research and Development", Journal of Field Robotics, 23(3/4), March/April 2006, pp. 245-267. (pdf)

- C3. A.I. Mourikis, N. Trawny, S.I. Roumeliotis, A. Johnson, and L. Matthies, "Vision-Aided Inertial Navigation for Precise Planetary Landing: Analysis and Experiments", In Proc. Robotics: Science and Systems Conference (RSS'07), Atlanta, GA, June 27-30, 2007.

- C2. A. Johnson, A. Ansar, L. Matthies, N. Trawny, A.I. Mourikis, and S.I. Roumeliotis, "A General Approach to Terrain Relative Navigation for Planetary Landing", In Proc. 2007 AIAA Infotech at Aerospace Conference, Rohnert Park, CA, May 7-10.

- C1. N. Trawny and S.I. Roumeliotis, "A Unified Framework for Nearby and Distant Landmarks in Bearing-Only SLAM", In Proc. 2006 IEEE International Conference on Robotics and Automation, Orlando, FL, May 15-19, pp. 1923-1929. (pdf)